Every June, something quietly seismic happens in the offices of university presidents and vice chancellors around the world. The annual league tables land. Institutions climb, stall, or slip. Communications teams mobilise. Strategy meetings are called. And students, governments, and funding bodies take note. Over the past two decades, university ranking systems have evolved from an academic curiosity into one of the most consequential forces shaping higher education policy, institutional investment, and student choice globally. Understanding what these systems actually measure, how they differ from one another, and where their limitations lie is no longer optional knowledge for education leaders. It is essential.

The global appetite for ranked higher education continues to grow. The QS World University Rankings 2025 evaluated over 1,500 universities across 106 countries and territories, making it the largest edition in its history. The Times Higher Education (THE) World University Rankings drew on 174.9 million citations and over 108,000 academic survey responses in its 2026 methodology. These are not marginal exercises in academic comparison. They are data-intensive systems with profound consequences for institutions, students, and entire national higher education strategies. School owners, university boards, and policymakers who engage with these systems thoughtfully are far better positioned than those who dismiss or misread them.

Understanding the University Ranking System Landscape

A university ranking system, at its most basic, is a structured framework for evaluating and comparing higher education institutions against a set of defined performance indicators. The concept emerged formally in the early 2000s. The Academic Ranking of World Universities (ARWU), widely known as the Shanghai Ranking, was the earliest of the major global tables, first published in 2003 by Shanghai Jiao Tong University. Its original purpose was to measure Chinese universities against global peers. What it created was an entirely new global conversation about institutional performance.

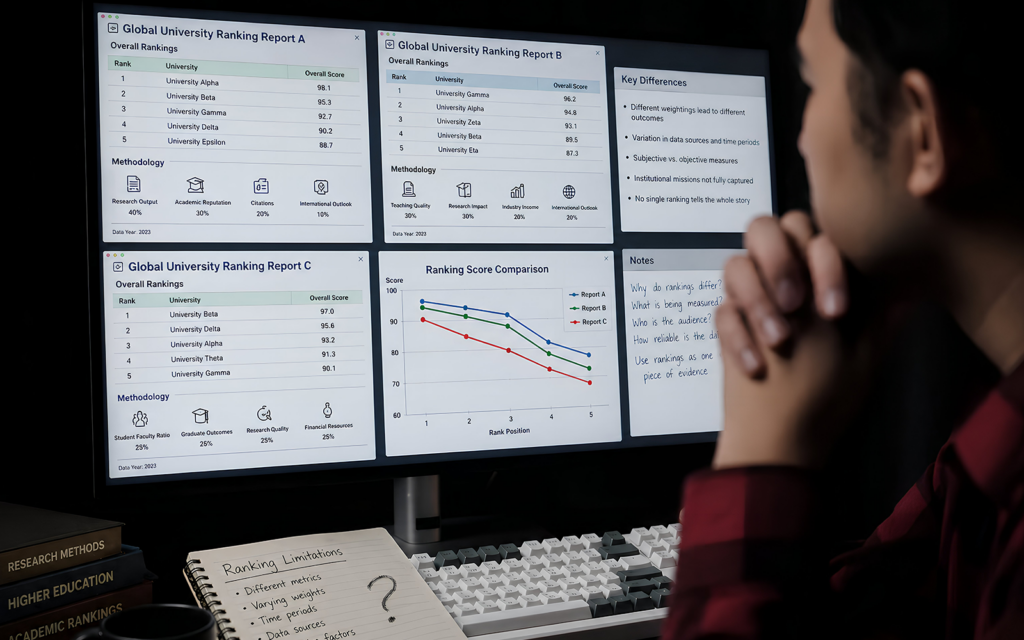

Today, the three most influential global university rankings are QS, THE, and ARWU. Each operates independently, uses different methodologies, and produces meaningfully different results. A university ranked in the global top 100 by one system may sit outside the top 200 in another. For institutions, this variance is not a flaw to be dismissed. It is a signal worth decoding.

What Each Major System Actually Measures

Each of the three dominant higher education rankings prioritises different evidence, which explains why their outputs diverge.

The QS World University Rankings, produced by Quacquarelli Symonds, organises performance across five broad lenses: research and discovery, employability and outcomes, learning experience, global engagement, and sustainability. Academic reputation carries the heaviest weight, based on surveys of over 151,000 academics in more than 140 countries. Employer reputation, citations per faculty, faculty-to-student ratio, international student and faculty ratios, and employment outcomes complete the picture. Critically, QS is the only major ranking to formally incorporate both sustainability and graduate employability into its core methodology.

The Times Higher Education Rankings place greater emphasis on research environment and citation impact. THE methodology draws on 18.7 million journal articles and related publications indexed by Elsevier’s Scopus database, with citations counted over a five-year publication window. Teaching environment, research quality, industry collaboration, and international outlook each contribute to the overall score. THE introduced a patents metric in 2023, measuring how often a university’s research is cited by patent filings globally, a recognition that knowledge transfer matters as much as knowledge creation.

The Academic Ranking of World Universities takes a deliberately different approach. ARWU uses six objective, third-party indicators: alumni winning Nobel Prizes and Fields Medals, staff winning Nobel Prizes and Fields Medals, highly cited researchers selected by Clarivate, articles published in Nature and Science, papers indexed in major citation databases, and per capita institutional performance. It does not use surveys, does not rely on institutional self-reporting, and does not attempt to measure teaching quality. Harvard University has topped the ARWU list for 23 consecutive years.

Why Ranking Criteria Produce Such Different Results

The divergence between systems is not accidental. It reflects fundamentally different beliefs about what universities are for. ARWU is unambiguously a measure of elite research output: it rewards institutions whose alumni and staff win the most prestigious scientific awards and publish in the most selective journals. This approach is transparent and reproducible, but it structurally favours large, research-intensive, English-language universities with long institutional histories.

QS and THE both attempt a broader view of institutional value, incorporating teaching, employability, and international diversity. But their weighting choices still shape outcomes significantly. The heavy reliance on reputational surveys in QS (academic reputation alone accounts for 30% of the overall score) means that brand recognition plays an outsized role. Newer institutions, or those in regions with less historical presence in academic networks, face a structural disadvantage regardless of their actual educational quality.

These differences in ranking criteria for universities mean that the same institution can simultaneously be considered world-class by one framework and middling by another. University leaders who understand this dynamic can use it strategically rather than feeling subject to it.
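The effect of weighting can be illustrated with a minimal sketch. Everything in it is an assumption made for illustration: the two universities, their indicator scores, and both weighting schemes are hypothetical and do not reproduce the actual QS, THE, or ARWU methodologies. It only shows how a weighted composite score can reverse the order of two institutions when the weights change.

```python
from typing import Dict, List

# Hypothetical indicator scores on a 0-100 scale (illustrative, not real data).
SCORES: Dict[str, Dict[str, float]] = {
    "University A": {"reputation": 90.0, "citations": 60.0, "international": 50.0},
    "University B": {"reputation": 55.0, "citations": 95.0, "international": 70.0},
}

# Two hypothetical weighting schemes; the weights in each sum to 1.0.
REPUTATION_HEAVY: Dict[str, float] = {
    "reputation": 0.6, "citations": 0.3, "international": 0.1,
}
RESEARCH_HEAVY: Dict[str, float] = {
    "reputation": 0.2, "citations": 0.7, "international": 0.1,
}


def composite(scores: Dict[str, float], weights: Dict[str, float]) -> float:
    """Return the weighted sum of indicator scores."""
    return sum(weights[name] * scores[name] for name in weights)


def rank(all_scores: Dict[str, Dict[str, float]],
         weights: Dict[str, float]) -> List[str]:
    """Return institution names ordered from highest to lowest composite."""
    return sorted(
        all_scores,
        key=lambda name: composite(all_scores[name], weights),
        reverse=True,
    )


if __name__ == "__main__":
    for label, weights in [("reputation-heavy", REPUTATION_HEAVY),
                           ("research-heavy", RESEARCH_HEAVY)]:
        print(label, rank(SCORES, weights))
```

Under the reputation-heavy weights, University A comes first; under the research-heavy weights, University B does. The underlying scores never change, only the judgement about which indicators matter most.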

The Limitations Every Institution Should Understand

A university ranking system is a useful instrument, but not a comprehensive one. Several well-documented limitations deserve serious attention from education decision-makers.

The most persistent critique is the overemphasis on research output at the expense of teaching quality. As noted by Wikipedia’s synthesis of global ranking scholarship, all three major systems primarily measure research performance, and none adequately captures the quality of the learning experience a student receives. A university can be an outstanding teaching institution and still rank poorly, and a highly ranked university can offer a mediocre teaching experience.

Structural bias towards size and language compounds this problem. ARWU’s reliance on Nobel Prizes and citations in English-language journals systematically disadvantages institutions in the humanities, social sciences, and non-Anglophone academic traditions. The European Commission has criticised ARWU on precisely these grounds, and the same concern applies, to varying degrees, across all three systems.

Data reporting inconsistencies also introduce noise. Institutions that invest in data management and submission processes score better not merely because they perform better, but because their performance is better documented. Smaller or under-resourced institutions may be systematically under-measured.

Finally, the OECD and a growing number of higher education researchers have raised concerns about the behavioural consequences of rankings: institutions may optimise for the metrics rather than for genuine educational outcomes, a form of institutional goal displacement with real consequences for students.

How Rankings Shape Institutional Strategy

Despite these limitations, the strategic impact of a university ranking system on an institution is undeniable. Three consequences are especially significant for university boards and senior leaders.

Student recruitment is directly affected. Prospective students, particularly those navigating the international market, routinely use rankings as a filter. A movement of 20 places in QS can translate into measurable shifts in application volumes, particularly from high-growth student markets in Asia, Africa, and the Middle East.

Funding and partnerships follow ranking signals closely. Governments allocate research funding, philanthropists direct endowments, and industry partners choose collaborating institutions partly on the basis of ranked prestige. Higher education rankings function, in this sense, as a proxy for institutional credibility in negotiations where detailed knowledge of academic quality is scarce.

Global partnerships and staff recruitment are also influenced. The most talented academic researchers and senior administrators consider institutional ranking when choosing where to work. An institution declining in the tables faces compounding effects: reputational damage reduces recruitment quality, which in turn affects research output, which further affects the ranking. Understanding this cycle is the first step to breaking it.

What Institutions Can Do to Strengthen Their Position

Improving performance within a university ranking system requires a deliberate, multi-year strategy rather than short-term optimisation. The most effective institutional responses tend to focus on four areas.

- Research output and citation visibility: Investing in open-access publishing, international research collaborations, and high-impact journal submissions directly improves citation metrics across all three systems.

- Data governance and reporting: Ensuring that institutional data is accurate, complete, and submitted through the correct channels prevents under-representation in rankings that rely on institutional submissions.

- International faculty and student recruitment: Improving the international ratios that QS and THE weight directly requires sustained investment in global recruitment pipelines and support infrastructure.

- Graduate outcomes tracking: As employability metrics gain weight in global university rankings, institutions that systematically track and evidence graduate career outcomes will hold a growing advantage.

Platforms such as Edutech Global support institutions in managing the data systems, student lifecycle processes, and operational infrastructure that underpin sustainable ranking improvement. The Edutech Global blog regularly explores how technology and strategy intersect in higher education management.

Where University Ranking Systems Are Heading

The university ranking system of 2030 will look meaningfully different from that of today. Several trends are already reshaping the field. Sustainability metrics are becoming standard, following QS’s lead in incorporating environmental and social responsibility indicators. Graduate employability is gaining weight as governments and students demand clearer evidence of return on educational investment. The OECD has long advocated for ranking frameworks that better reflect teaching quality and equity of access, and that pressure is translating, slowly, into methodological evolution.

Data-driven, real-time evaluation models are also emerging. As institutions generate richer longitudinal data on student outcomes, the static annual survey may give way to more dynamic, evidence-based assessments of institutional performance.

For university boards, school owners, and education policymakers, the lesson is consistent: rankings reward what institutions actually do well, over time, and at scale. Engaging with the university ranking system critically, strategically, and honestly is not a concession to external pressure. It is a mark of institutional maturity.